[March 26, 2019]

On Humans Interpreting AI and Robot Behavior

A conversation with Professor Nathan Michael, Shield AI’s Chief Technology Officer. This is a continuation of our conversation about Trust and Robotic Systems.

How do humans factor into the trust of robotic systems?

Humans trust engineered systems that adhere to performance expectations. If a system works as expected, we tend to trust it. Interestingly, if the system works as designed but in a manner that does not align with our expectations, we tend to distrust it, at least until we better understand how the system is designed to work. Robotic systems are viewed similarly. Consequently, trust requires both that the human understand how the system is expected to perform and that the system perform as expected with high reliability.

Establishing operator expectations is particularly challenging when working with resilient intelligent systems that introspect, adapt, and evolve to improve their performance over time. We must consider both how to enable the operator to work with the system and how to help the operator understand how the system improves through experience. Notably, human expectations, intuition, and understanding do not always lead to optimal behavior. Humans tend to optimize their behavior to conserve effort, based on the innate biological drive to conserve energy, but robots can be built to optimize for many different objectives, maximizing some quantities and minimizing others.

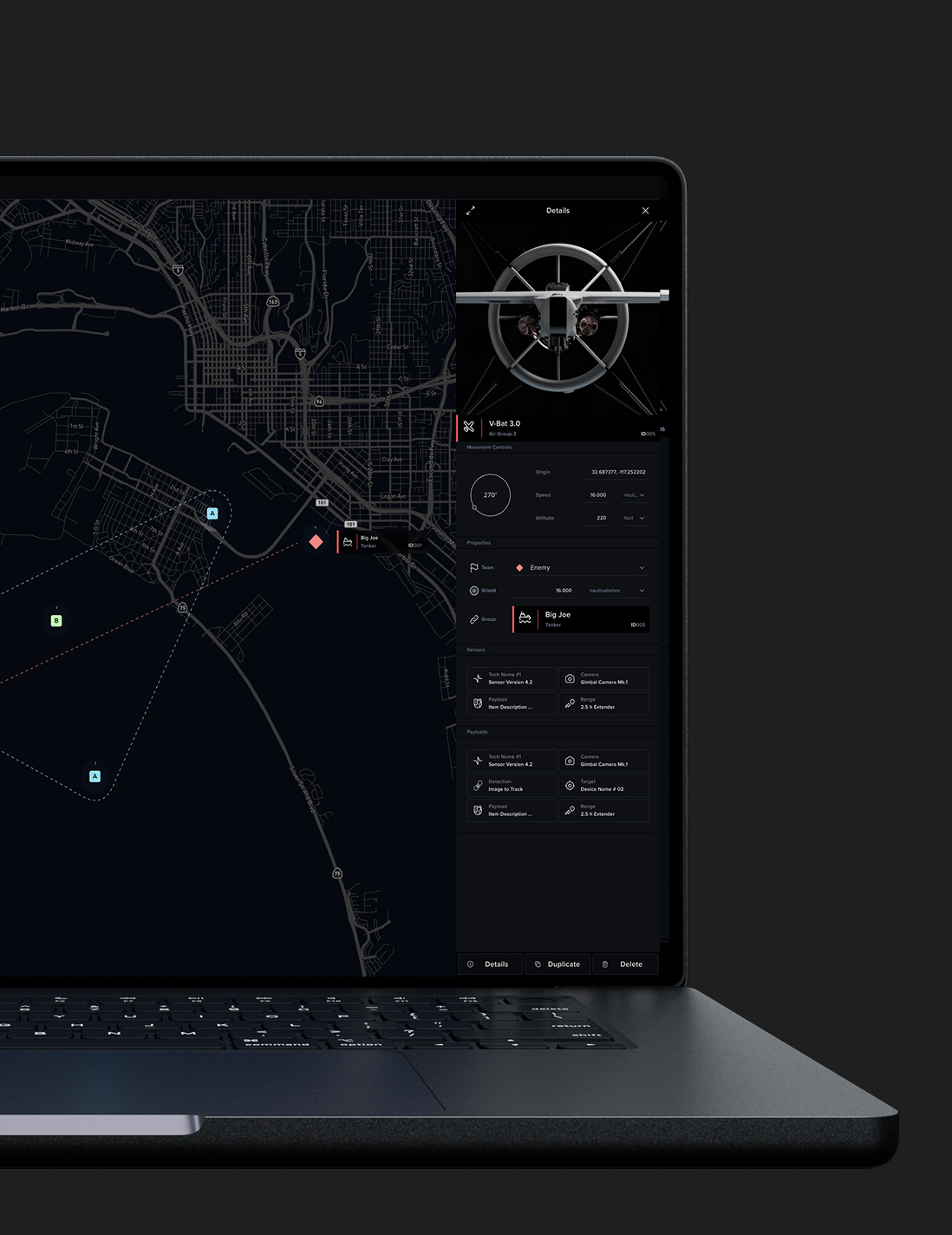

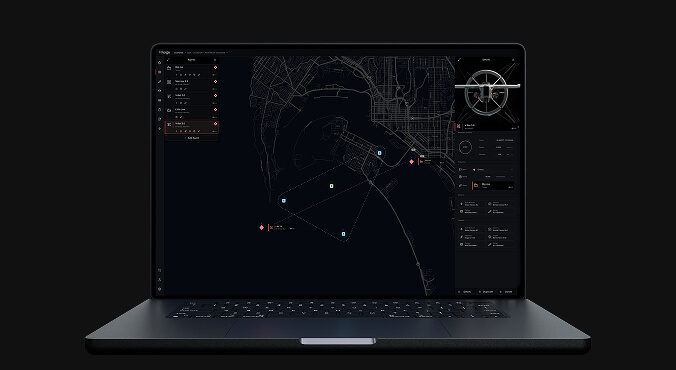

This idea of enabling the operator to understand the performance of the system, as well as how it improves over time, is a central goal of Explainable AI, an active area of research and development. At Shield AI, we leverage concepts from this area, along with our familiarity with the customer, to design systems that strive to align performance gains with operator intuition in an operational context, so that users can work effectively with the system and establish trust in its performance. Of course, reliability plays a big role here: no one trusts a system that fails. So we are constantly working to increase system reliability.

Tell us more about human interaction with AI. How can society come to understand AI better through observation?

AI isn’t the first disruptive, complex technology that society has faced. A great historical example is how society reacted to the introduction of cars well over a hundred years ago. Initially, people didn’t know how to engage with the technology. There were no crosswalks, so pedestrians didn’t know when and where it was safe to cross streets. Drivers didn’t know what should happen when they met other vehicles at an intersection. Eventually, rules were developed, and over time those rules became clearer. But early on, before rules were established, people had to have faith that cars and their drivers would behave in a reasonable and safe manner. In time, through the establishment of rules and regulations, society at large came to understand the consequences of this technology and how it should be regulated and managed.

This idea of faith and trust in complex systems really comes down to three things: the degree to which we understand the complexity, the degree to which we know that the complexity is being managed, and the degree to which society at large has structures and mechanisms for managing it. Once those have been addressed, we start to move more fully toward trust.