[December 25, 2018]

Shield AI Fundamentals: On Human-Computer Interaction

A conversation with Ali Momeni, Senior Principal Scientist and Director of User Services & Experience at Shield AI.

Human interaction design – how do you refer to it?

The terms that are often used are HCI or CHI for human-computer interaction, and HRI for human-robot interaction.

Which phrasing do you prefer?

It really depends on which community you’re speaking to. If you’re speaking to a roboticist (or, as is the case for Shield AI, to our customers), I think HRI makes a lot of sense. If you’re among colleagues specializing in this field, such as at the CHI Conference, then HCI or CHI probably makes more sense. You tend to see HCI used to refer to more general aspects of human-computer interaction. For example, areas such as “social computing” or “smart homes” are quite active in HCI at large, but less applicable to our work at Shield AI.

What does human computer interaction look like at Shield AI?

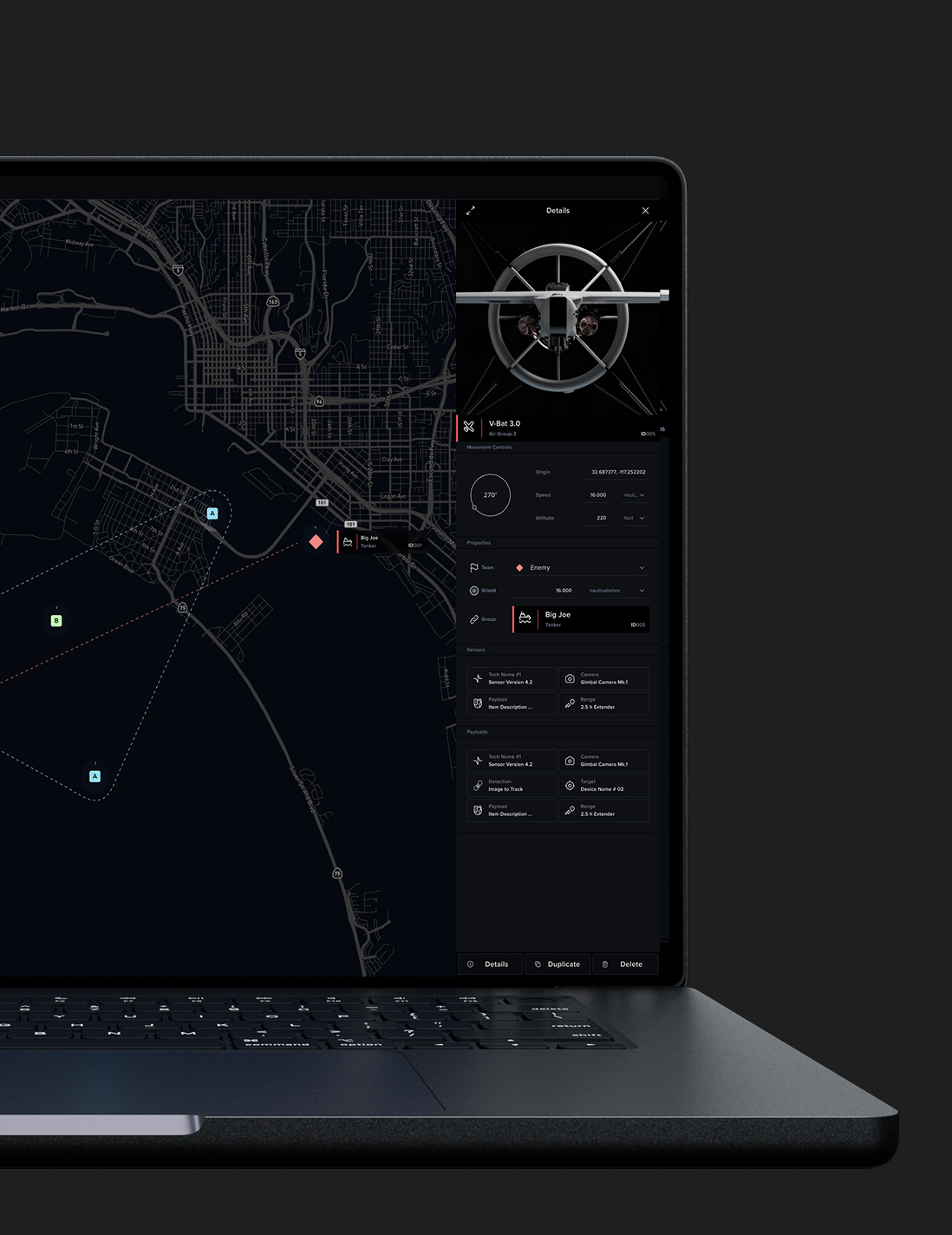

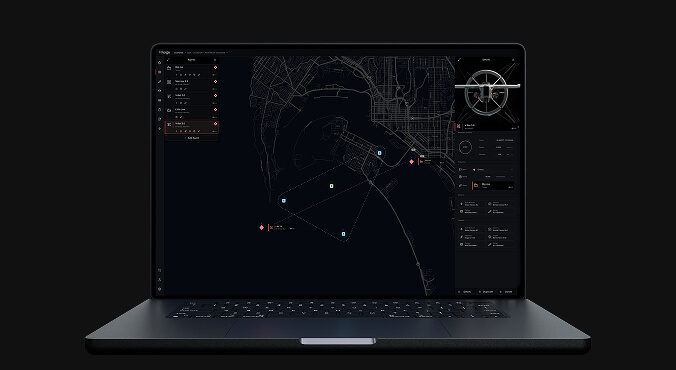

It’s about creating seamless experiences with very complex systems. In our case, it’s also about navigating the many ways a robotics system is put into contact with a human being, and how those interactions can enable and empower the user.

In many HCI scenarios, the system consists of a screen and a keyboard, or a mobile device; but we have a much more complex ecosystem here at Shield AI. Our ecosystem of interactions includes the user’s sight line, voice commands, communication with other people, and communication with the robot, both on- and off-screen.

Why is human computer interaction important at Shield AI?

Human-computer interaction is so important at Shield AI because of our values and our mission. We are people-centric. Our mission is not simply to advance the state of the art in artificial intelligence; it’s to make artificial intelligence useful to people and to protect people. Every mention of ‘people’ in those phrases is where we come in as human-computer interaction practitioners.

In that vein, I would also point to whom we consider the user. In HCI and HRI you’re always imagining what the user does, what the user wants, or how the user feels. At Shield AI, we have many types of users to consider. We have traditional users, but we also have users who are decision makers. They might never touch a robot, they might never touch an interface, but we need to train them to understand our tools. So the range of users here is unusually broad, and that makes the work really different and really interesting.

Do you have an example of a design decision that your team has made that has shifted the way the product is used?

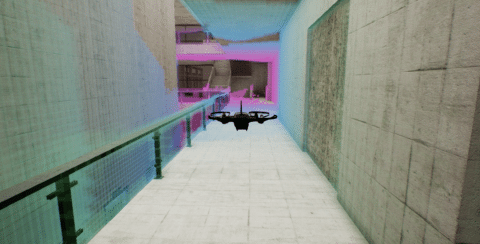

Yes. I think the best example is simulation. We’ve been using simulation here for a while, but its role has been somewhat limited. In the past, only part of the autonomy stack relied on simulation, primarily planning and controls. We have recently expanded the use of simulation and visualization to serve many more users, including external ones.

We look to the video game industry for its high levels of fidelity and engagement. Our engineers who were previously relying on simple visualizations are now observing the robot in a photo-realistic environment. We can gain tremendous insight from this.

On the customer-facing side, features such as training are going to be experienced in the same simulation and visualization system, one that is based on the same tools that our engineers use internally. So, in a way, we are bridging the internal and external users with this world of simulation. That use of simulation is allowing us to think much more ambitiously about how our customers will engage with our products.