[August 30, 2019]

What is Coordinated Exploration?

What is coordinated exploration?

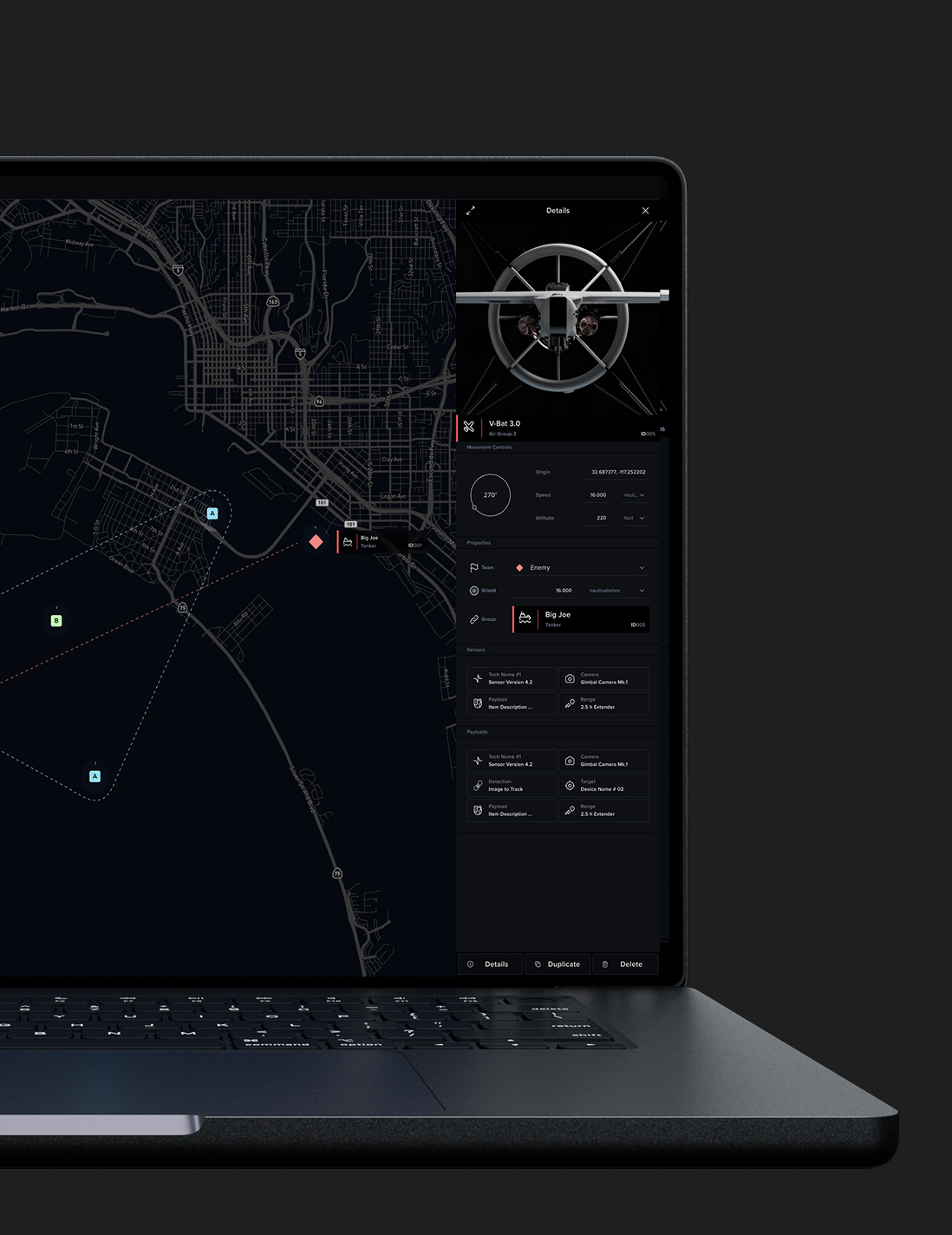

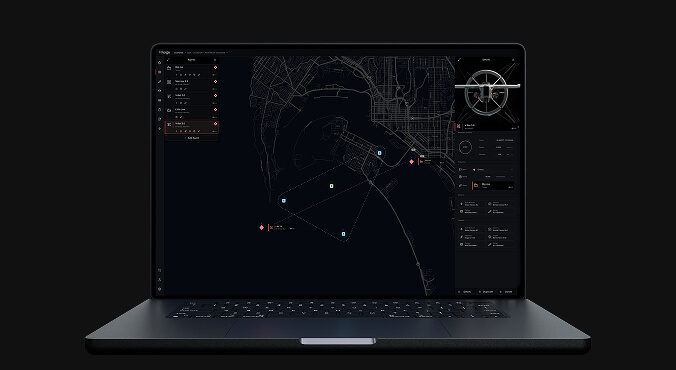

At Shield AI, coordinated exploration refers to deploying multi-robot systems to explore an environment. These systems collectively build a structural model of that environment by moving through and navigating around it.

Coordinated exploration refers to how the robots decide, together, how to move through an environment in order to model it: where to go and how to navigate. These decisions vary with the performance of the individual robots, how well the robots’ sensors perceive the environment, and environmental conditions such as the structure, complexity, and clutter of the robots’ surroundings. As the system flies through the environment, the robots communicate with each other at regular intervals when possible, share information, and collectively build up a distributed model that they use to make further decisions about their coordinated exploration.
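To make the idea of sharing information and building a collective model concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of Shield AI's system: the map representation (a dictionary from grid cell to an `(occupied, timestamp)` pair), the `merge_maps` function, and the "most recent observation wins" rule are all hypothetical choices made for this example.

```python
def merge_maps(local_map, received_map):
    """Merge a teammate's map into a robot's own map.

    Assumption (hypothetical, for illustration): each map is a dict that
    maps a grid cell (row, col) to a tuple (occupied, timestamp), and the
    most recent observation of a cell wins.
    """
    merged = dict(local_map)  # copy so the robot's own map is not mutated
    for cell, (occupied, stamp) in received_map.items():
        # Take the teammate's observation if the cell is new to us
        # or if their observation is more recent than ours.
        if cell not in merged or stamp > merged[cell][1]:
            merged[cell] = (occupied, stamp)
    return merged


# Two robots that have each seen part of the environment.
robot_a = {(0, 0): (False, 1.0), (0, 1): (True, 2.0)}
robot_b = {(0, 1): (False, 3.0), (1, 1): (True, 1.5)}

shared = merge_maps(robot_a, robot_b)
```

Because the rule keeps only the newest observation per cell, merging the same message twice leaves the map unchanged, which is convenient when robots exchange maps repeatedly at regular intervals.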

Coordination can play different roles in enabling multi-robot systems to work together, better understand the environment in which they’re operating, and build collective, consistent models. Often, we formulate coordinated exploration to explore complex environments quickly. Sometimes those strategies have every robot working together to gather as much data as possible and build up a model as quickly and as accurately as possible. Other times, we formulate coordinated exploration to emphasize different objectives. For example, we might decide that it’s more important to build up an accurate model while transferring data back to an external observer. Some of the agents within the system may then need to change their role to communicate with that external observer, which may sacrifice some of the speed of exploration.

How does coordinated exploration relate to reinforcement learning?

The basic idea behind reinforcement learning is that the system learns to identify the best possible behavior based on some type of reward. Within the context of coordinated exploration, individual robots are working together to build a model of the environment, share that model, and ensure that it is consistent. They’re working together to collectively decide where they should go in order to maximize the accuracy and expansiveness of their model. The overall goal of their exploration is to figure out where to go in order to learn the most about the environment. Extending this to reinforcement learning, the reward corresponds to the amount of information that can be gathered about the environment.
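One common way to quantify "the amount of information that can be gathered" is the entropy of an occupancy grid. The sketch below is a hedged illustration of that idea, not the formulation used by Shield AI. The grid layout, the square sensor footprint, the function names, and the simplifying assumption that observing a cell removes all of its uncertainty are all hypothetical.

```python
import math


def cell_entropy(p):
    """Shannon entropy in bits of a cell whose occupancy probability is p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0  # a cell known to be free or occupied carries no uncertainty
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))


def information_reward(grid, position, sensor_range):
    """Reward for visiting `position`: total entropy of the cells in view.

    Assumptions (hypothetical): the sensor sees a square window of
    half-width `sensor_range`, and seeing a cell resolves it completely.
    """
    row, col = position
    total = 0.0
    for r in range(max(0, row - sensor_range), min(len(grid), row + sensor_range + 1)):
        for c in range(max(0, col - sensor_range), min(len(grid[0]), col + sensor_range + 1)):
            total += cell_entropy(grid[r][c])
    return total


def best_viewpoint(grid, candidates, sensor_range):
    """Pick the candidate position with the highest information reward."""
    return max(candidates, key=lambda pos: information_reward(grid, pos, sensor_range))


# Left half of the map is already known to be free; right half is unknown (p = 0.5).
grid = [[0.0, 0.0, 0.5, 0.5] for _ in range(4)]
choice = best_viewpoint(grid, [(1, 0), (1, 3)], sensor_range=1)
```

In this toy map the viewpoint next to the unexplored half is preferred, because the already-mapped cells contribute no reward.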

And does the size of the environment that they’re exploring or the number of agents impact the system’s ability to do this?

Yes, both of those factors will impact exploration. How much exploration depends on the size of the environment comes down to how informative that environment is.

A large environment may hold very little information; it might be somewhat boring because a robot can move through it very quickly and get a good enough idea of its nature. A great example would be a giant open room with very little clutter. The robot could quickly enter, look around, perceive the room, and leave. But if that same giant room contained a tremendous amount of clutter (tables, chairs, piles of all different kinds of objects all over the place), the robot would have to explore across the room in order to see everything that is there. The system would then have to invest more time, more effort, and more energy in order to look around.

From there, the number of robots required to explore an environment effectively depends on the robots’ sensors, processing, and flight endurance. If you need to explore a complex environment quickly, then you need more robots. A multi-robot system will determine how many robots should be exploring an environment based on its current understanding of the environment’s size and complexity, as well as the robot team’s capabilities and state.
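As a rough back-of-the-envelope illustration of how size, complexity, and robot capability could combine, consider the sketch below. The model and every parameter in it (`clutter_factor`, `coverage_rate_m2_per_s`, the linear scaling) are assumptions invented for this example; a real system would estimate these quantities online and would not be limited to a single formula.

```python
import math


def robots_needed(area_m2, clutter_factor, coverage_rate_m2_per_s,
                  time_budget_s, endurance_s):
    """Estimate a team size for exploring an environment within a time budget.

    Hypothetical model: clutter multiplies the effective area to cover,
    and each robot covers area at a constant rate until either the time
    budget or its flight endurance runs out.
    """
    usable_time_s = min(time_budget_s, endurance_s)
    per_robot_area = coverage_rate_m2_per_s * usable_time_s
    if per_robot_area <= 0:
        raise ValueError("coverage rate and usable time must be positive")
    effective_area = area_m2 * clutter_factor
    return max(1, math.ceil(effective_area / per_robot_area))


# Same room, same two-minute budget: open versus cluttered.
open_room = robots_needed(1200.0, 1.0, 4.0, 120.0, 600.0)
cluttered_room = robots_needed(1200.0, 2.0, 4.0, 120.0, 600.0)
```

Under these assumed numbers, doubling the clutter factor raises the estimate from three robots to five, which mirrors the point that a complex environment explored quickly calls for more robots.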