[July 19, 2018]

Robotic Teams and Reliability

You’ve conducted extensive research on man-machine collaboration. Why have you chosen this focus?

The concept of man-machine collaboration is very intuitive: we’re pairing a set of robotic systems with one or more people. The barebones tech behind connecting man to machine and machines to other machines is well-studied. I think the hard part of effective man/machine collaboration, especially in small deployments of one to ten robotic systems, is establishing and keeping trust.

We, as human beings, don’t fully trust autonomy, and we shouldn’t without evidence that the autonomy is effective and predictable. That kind of trust has to be earned. Our company’s mission, to protect human beings, has such a social impact that it requires an even more rigorous approach to all aspects of trust in man/machine collaboration than most other robotics companies need.

My approach to trust in man/machine collaboration has focused on five research vectors: 1) provable reliability, 2) reliability gradients, 3) transparent operation, 4) intuitive feedback and control, and 5) security. These research areas cover a vast swath of the concepts required to pursue mission-critical, real-time, and effective cyber-physical systems.

Can you elaborate on the concept of provable reliability? What does it refer to? How does it differ from gradients of reliability?

Provable reliability refers to formal methods and mathematical techniques used to exhaustively verify that attributes of the autonomous system are correct. These techniques are often applied offline before the robots are deployed in a mission. You focus your analysis on things like the safe operation of the robotic systems with each other and with human operators. You also analyze whether the autonomous system can perform missions, such as search, thoroughly and completely.
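To make the idea of exhaustive offline checking concrete, here is a minimal sketch in Python. It is only an illustration, not the method described in this interview: the corridor model, the names `check_invariant`, `moves`, and `no_collision`, and the corridor length are all assumptions invented for the example. It explores every reachable state of two robots in a short corridor and reports any state in which they share a cell.

```python
from collections import deque

def check_invariant(initial, successors, invariant):
    """Explore every reachable state breadth-first.

    Returns a state that violates the invariant, or None if no
    reachable state violates it.
    """
    seen = {initial}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            return state
        for nxt in successors(state):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return None

CELLS = 5  # hypothetical corridor length, chosen for illustration

def moves(state, guarded):
    """Yield states reachable when one robot moves one cell left or right.

    With guarded=True, the (hypothetical) controller refuses any move
    that would place both robots in the same cell.
    """
    for delta in (-1, 1):
        for robot in (0, 1):
            pos = list(state)
            pos[robot] += delta
            if not 0 <= pos[robot] < CELLS:
                continue  # stay inside the corridor
            if guarded and pos[0] == pos[1]:
                continue  # controller blocks the colliding move
            yield tuple(pos)

def no_collision(state):
    """Safety property: the two robots never occupy the same cell."""
    return state[0] != state[1]

safe = check_invariant((0, 4), lambda s: moves(s, True), no_collision)
unsafe = check_invariant((0, 4), lambda s: moves(s, False), no_collision)

print("guarded controller counterexample:", safe)
print("unguarded controller counterexample:", unsafe)
```

With the guarded controller the search finds no counterexample. Without the guard it returns a concrete colliding state, which is the kind of evidence an exhaustive check can hand back to a human operator. Real systems have far larger state spaces than this toy corridor, which is why such checks are usually run offline.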

Provable reliability tends to be very computationally intense, and it almost always has to be done offline, not while the mission is ongoing. For online systems that must ensure correctness during missions, especially those that deal with large state spaces with lots of variables and really intense computation, you often have to make trade-offs between full reliability and time. So, a key part of the research is understanding when this trade-off has to take place. We want to provide comprehensive evidence to human operators, and our robots also need to be able to perform the mission. We have to help characterize what the trade-offs mean and what the system can actually be relied upon to do every single time the robotic system is used.
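One way to picture the trade-off between full reliability and time is a check that works within a fixed budget and says so honestly when the budget runs out. The Python sketch below is an assumption-laden illustration, not the approach used by the speaker: `bounded_check`, the grid size, the hazard cell, and the use of a state count as a stand-in for time are all hypothetical.

```python
from collections import deque

def bounded_check(initial, successors, invariant, max_states):
    """Breadth-first check that stops after examining max_states states.

    Returns "proved" if every reachable state was examined and satisfied
    the invariant, "violated" if a bad state was found, and "unknown"
    if the budget ran out before either could be established.
    """
    seen = {initial}
    queue = deque([initial])
    explored = 0
    while queue:
        if explored >= max_states:
            return "unknown"
        state = queue.popleft()
        explored += 1
        if not invariant(state):
            return "violated"
        for nxt in successors(state):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return "proved"

SIZE = 20            # hypothetical grid side length
HAZARD = (19, 19)    # hypothetical cell the robot must never enter

def grid_moves(state):
    """Yield the in-bounds neighbors of a grid cell (4-connected)."""
    x, y = state
    for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nx, ny = x + dx, y + dy
        if 0 <= nx < SIZE and 0 <= ny < SIZE:
            yield (nx, ny)

def fenced_moves(state):
    """Same moves, but a (hypothetical) geofence blocks the hazard cell."""
    return (n for n in grid_moves(state) if n != HAZARD)

def avoids_hazard(state):
    return state != HAZARD

quick = bounded_check((0, 0), grid_moves, avoids_hazard, max_states=50)
full = bounded_check((0, 0), grid_moves, avoids_hazard, max_states=10_000)
fenced = bounded_check((0, 0), fenced_moves, avoids_hazard, max_states=10_000)

print(quick, full, fenced)
```

The small budget cannot reach the far corner of the grid, so the honest answer is "unknown" rather than a false assurance. Given enough budget, the same check either finds the violation or, with the geofence in place, covers the whole state space. Reporting which of the three verdicts was reached is one simple way to characterize what an operator can actually rely on.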

We’re not only working toward trustable systems, but we’re also working toward trustable robotics that can scale to arbitrary sensors, actuators and numbers of men and machines in the collaboration. This is very important to us.

How does transparent operation relate to intuitive feedback and control?

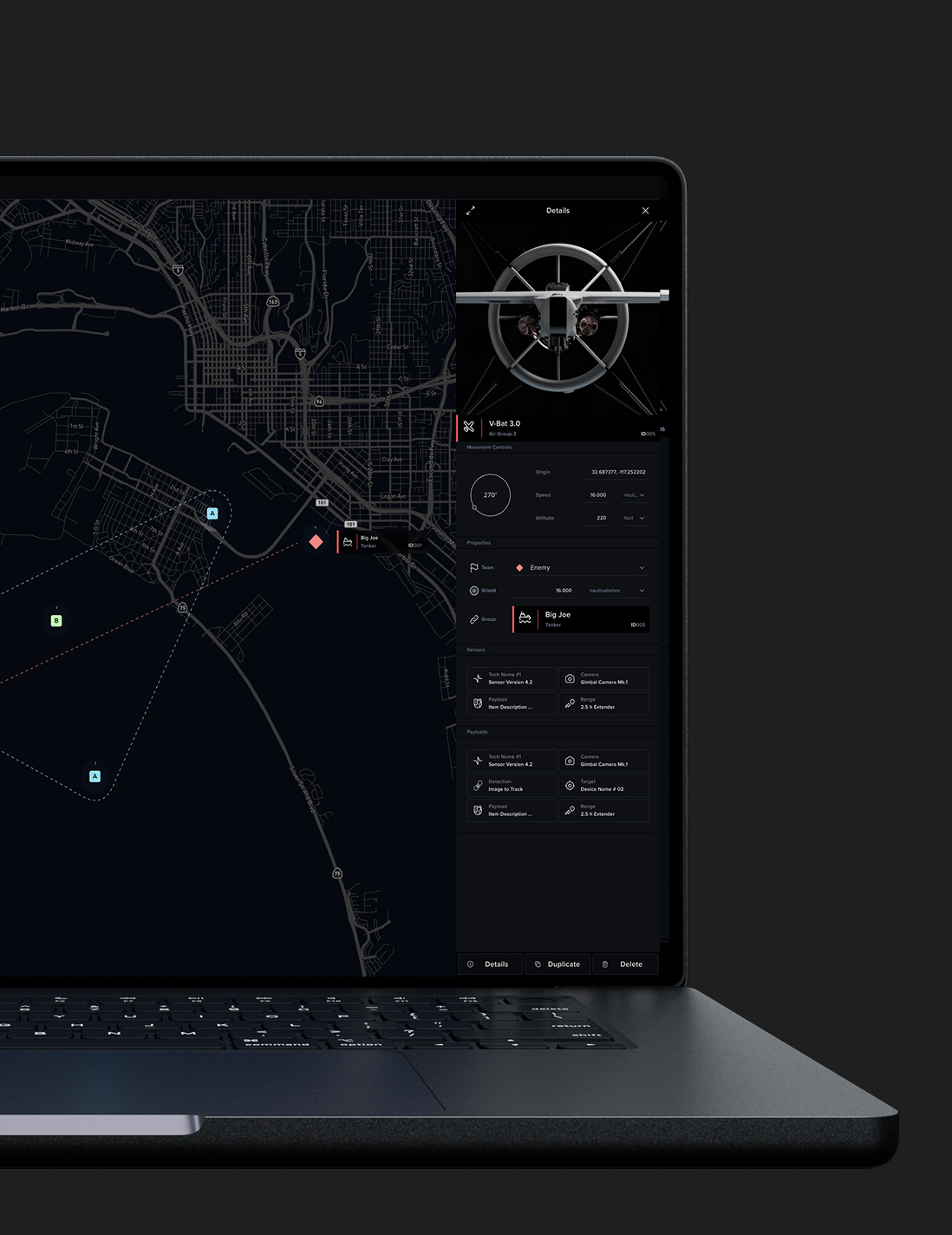

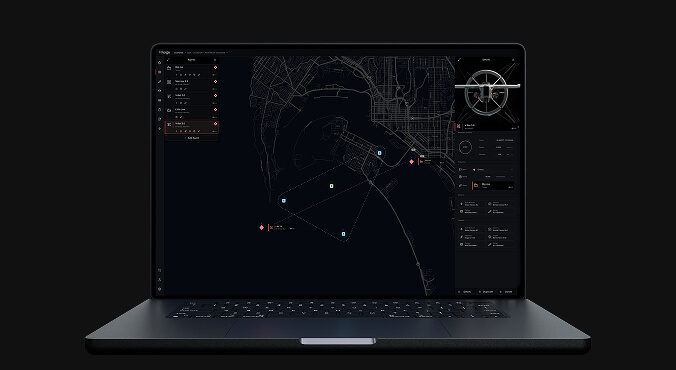

Transparent operation is a key component of establishing trust, and in our approach to autonomy, it covers multiple topics. A user of our types of robotics needs transparency in the information flow, in the computation, in the movement characteristics of the robotic system, and in the human-directed operation of the system.

Basically, transparent operation refers to the ability of the customers and the users to understand what’s going on. We need to convey what the robotic system is doing at all times, and this involves both offline and online transparency mechanisms. Intuitive feedback and control refers to the very core of the man/machine interaction. How do you command the robotic system? How do you know if it is doing what it is supposed to do? We need highly intuitive mechanisms for command, control, and feedback from the system to let us know that we can still trust the system.

No one wants to be next to a robot that is behaving erratically. To establish trust, a robot needs to not only be provably correct and safe, it also needs to act safely and reliably. Think of it in terms of walking next to a person down the sidewalk. If you are walking next to someone who is strolling confidently in a straight line, you are less likely to feel uncomfortable or unsafe walking next to or behind that person. However, if the person begins veering all over the sidewalk and bumping into you constantly, you are probably not going to trust that person at all. You may stop your movement entirely, wait for that person to leave the area, and delay doing what you had planned on doing just to be in a situation in which you feel safer.

This is where the line between transparency and intuitive feedback and control really starts to blur. It’s where you can clearly see how trust can be conveyed through movement itself. It also shows the types of transparency we’re trying to apply here from the data level up to logic and into the man/machine collaboration itself.

The fifth area of trust you mentioned was security. What is unique about security in autonomous systems?

Modern robotics development has been focused on feature development over security for a long time. We of course tackle the obvious problems in security, such as encryption of data on disk and over the wire to human operators. But if we hope to have any chance of establishing real trust in man/machine collaboration, we also have to tackle the problem of security from the ground up. We have to contract specialists to perform code audits of our software stack from the middleware to the implemented autonomy systems. We need to have hardware specialists who can analyze our drivers and maybe even our physical systems to prevent malicious attacks. We need to analyze coercive data in analytics that may lead machine-learned systems to bad results and decisions. We need to look at the problems of insider threats within our robotics networks, our data backends, and among our users.

We must look ahead. We must develop robots with the understanding that we need transparent systems for our users because our users have to understand what our systems are doing and why. But transparency for our users shouldn’t mean giving malicious actors and adversaries full transparency into that same system and data. The days of broadcasting production systems in plain text should be behind us, but encryption alone will not protect our users. It is incumbent on us and our company to continually address security, investigate old and new attack surfaces, and protect our users and ultimately their trust in our systems.